Ollama

Overview

Ollama is a cross-platform (macOS, Windows, Linux) client and inference framework for deploying large language models (LLMs) such as Llama 2, Mistral, and Llava. With its one-click setup, Ollama runs LLMs locally, which improves data privacy and security by keeping your data on your own machine.

Dify supports integrating the LLM and Text Embedding capabilities of models deployed with Ollama.

Configure

1. Download Ollama

Visit the Ollama download page to download the Ollama client for your system.

2. Run Ollama and Chat with Llava

After a successful launch, Ollama starts an API service listening on local port 11434.
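To confirm the service is up (for example, after starting a model with `ollama run llava` in a terminal), you can query Ollama's `/api/tags` endpoint, which lists the locally available models. This is a minimal sketch that assumes the default address `http://localhost:11434`; change `OLLAMA_BASE_URL` if your service runs elsewhere.

```python
import json
import urllib.request

# Assumption: Ollama runs on this machine on its default port.
OLLAMA_BASE_URL = "http://localhost:11434"


def parse_model_names(payload: dict) -> list[str]:
    """Extract model names from an /api/tags response body."""
    return [m["name"] for m in payload.get("models", [])]


def list_local_models(base_url: str = OLLAMA_BASE_URL, timeout: float = 5.0) -> list[str]:
    """Return the names of locally available models; raises OSError if unreachable."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
        return parse_model_names(json.load(resp))


if __name__ == "__main__":
    try:
        print(list_local_models())
    except OSError as exc:  # URLError and timeouts are both OSError subclasses
        print(f"Ollama API not reachable at {OLLAMA_BASE_URL}: {exc}")
```

An empty list means the service is running but no model has been pulled yet.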

For other models, visit Ollama Models for more details.

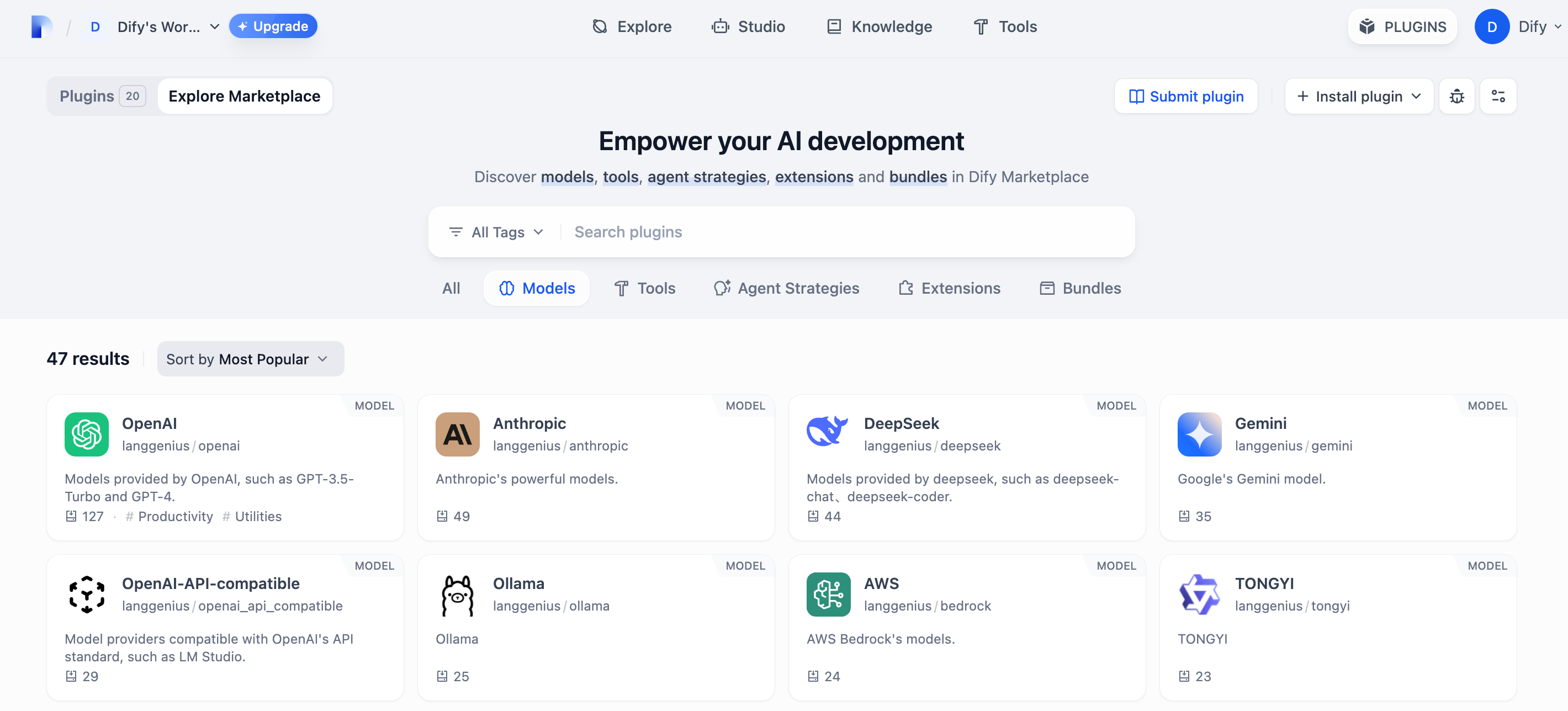
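Besides the interactive CLI, the same chat with Llava can be driven over the HTTP API. The sketch below sends a single non-streaming request to Ollama's `/api/chat` endpoint and attaches an optional image as base64, which is how vision models like Llava receive pictures. It assumes the `llava` model is already pulled and Ollama listens on the default local port; `photo.png` is a hypothetical file name, and `num_ctx` is the Ollama request option that sets the context window.

```python
import base64
import json
import urllib.request
from pathlib import Path
from typing import Optional

OLLAMA_BASE_URL = "http://localhost:11434"  # assumption: default local port


def build_chat_payload(model: str, prompt: str, image_b64: Optional[str] = None,
                       num_ctx: int = 4096) -> dict:
    """Build a non-streaming /api/chat request body."""
    message = {"role": "user", "content": prompt}
    if image_b64 is not None:
        # Vision models such as Llava accept base64-encoded images on the message.
        message["images"] = [image_b64]
    return {
        "model": model,
        "messages": [message],
        "stream": False,                  # one JSON object instead of streamed chunks
        "options": {"num_ctx": num_ctx},  # context window size in tokens
    }


def chat(payload: dict, base_url: str = OLLAMA_BASE_URL) -> str:
    """POST the payload and return the assistant's reply text."""
    req = urllib.request.Request(
        f"{base_url}/api/chat",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=120) as resp:
        return json.load(resp)["message"]["content"]


if __name__ == "__main__":
    image_path = Path("photo.png")  # hypothetical local image
    image = (base64.b64encode(image_path.read_bytes()).decode("ascii")
             if image_path.exists() else None)
    try:
        print(chat(build_chat_payload("llava", "Describe this image.", image)))
    except OSError as exc:
        print(f"Request failed: {exc}")
```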

3. Install Ollama Plugin

Go to the Dify Marketplace, search for Ollama, and install the plugin.

4. Integrate Ollama in Dify

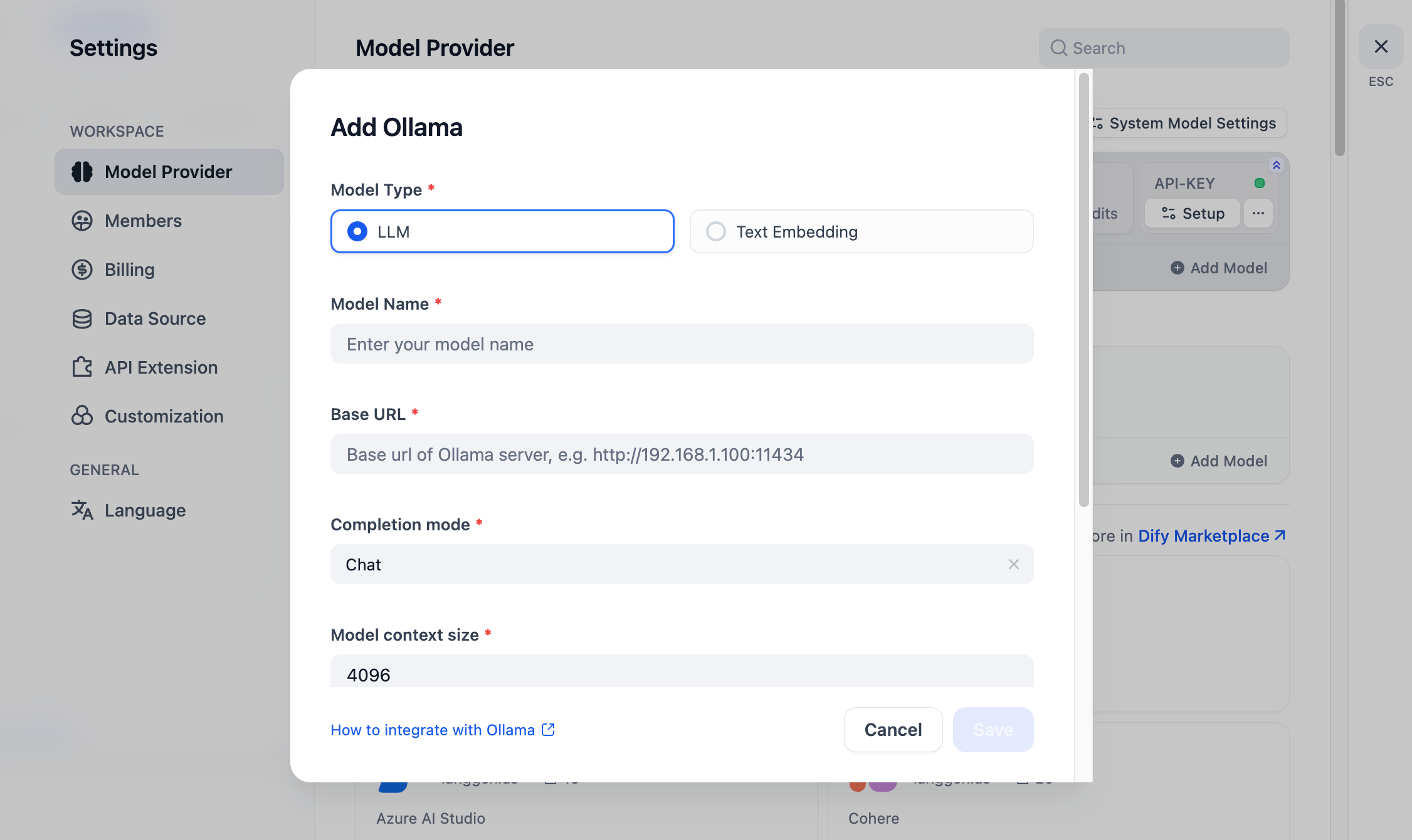

In the Ollama model provider settings in Dify, fill in the following fields:

- Model Name: the name of the model as it is served by Ollama.

- Base URL: the base URL where the Ollama service is accessible.

  - If Dify is deployed using Docker, use the host machine's local network IP address rather than localhost, because localhost inside a container refers to the container itself.

  - For a local source code deployment, use localhost with port 11434.

- Model Type: the type of the model being added.

- Model Context Length: the maximum context length of the model. If unsure, use the default value of 4096.

- Maximum Token Limit: the maximum number of tokens returned by the model. If the model has no specific requirement, this can be set to the same value as the model context length.

- Support for Vision: check this option if the model supports image understanding (multimodal), such as Llava.
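The fields above can be sketched as a small data structure with two sanity checks. Everything here is hypothetical: the class, the helper, and the `model_type` label are illustrations, not Dify's internals. The check that Maximum Token Limit does not exceed Model Context Length is an assumption drawn from the guidance above, not a documented Dify constraint.

```python
from dataclasses import dataclass


@dataclass
class OllamaModelConfig:
    """Hypothetical mirror of the Dify form fields for an Ollama model."""
    model_name: str
    base_url: str
    model_type: str = "llm"      # hypothetical label; Dify shows its own option names
    context_length: int = 4096   # default suggested above
    max_tokens: int = 4096       # may equal context_length
    vision: bool = False         # "Support for Vision"

    def validate(self) -> None:
        """Raise ValueError for obviously inconsistent settings (assumed rules)."""
        if not self.base_url.startswith(("http://", "https://")):
            raise ValueError("base_url must include the scheme, e.g. http://")
        if self.max_tokens > self.context_length:
            raise ValueError("max_tokens should not exceed context_length")


def pick_base_url(dify_in_docker: bool, host_lan_ip: str, port: int = 11434) -> str:
    """Choose the Base URL according to how Dify is deployed.

    Inside a Docker container, "localhost" is the container itself,
    so the host machine's LAN IP is used instead.
    """
    host = host_lan_ip if dify_in_docker else "localhost"
    return f"http://{host}:{port}"
```

For example, `pick_base_url(True, "192.168.1.10")` returns `http://192.168.1.10:11434`, where `192.168.1.10` is a placeholder for your host's actual LAN address.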

After verifying that there are no errors, click "Save" to use the model in your application.

Embedding models are integrated the same way as LLMs; just set the model type to Text Embedding.
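To check that an embedding model responds before adding it as Text Embedding, you can call Ollama's `/api/embed` endpoint directly. This is a minimal sketch assuming a recent Ollama version (older releases expose `/api/embeddings` with a `prompt` field instead) and an embedding model you have already pulled, such as `nomic-embed-text`.

```python
import json
import urllib.request

OLLAMA_BASE_URL = "http://localhost:11434"  # assumption: default local port


def build_embed_payload(model: str, texts: list[str]) -> dict:
    """Request body for Ollama's /api/embed endpoint (batch input)."""
    return {"model": model, "input": texts}


def embed(model: str, texts: list[str], base_url: str = OLLAMA_BASE_URL) -> list[list[float]]:
    """Return one embedding vector per input text."""
    req = urllib.request.Request(
        f"{base_url}/api/embed",
        data=json.dumps(build_embed_payload(model, texts)).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=60) as resp:
        return json.load(resp)["embeddings"]
```

If `embed("nomic-embed-text", ["hello"])` returns a single vector, the same model name should work in the Dify form.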

For more details, see Dify's official documentation.

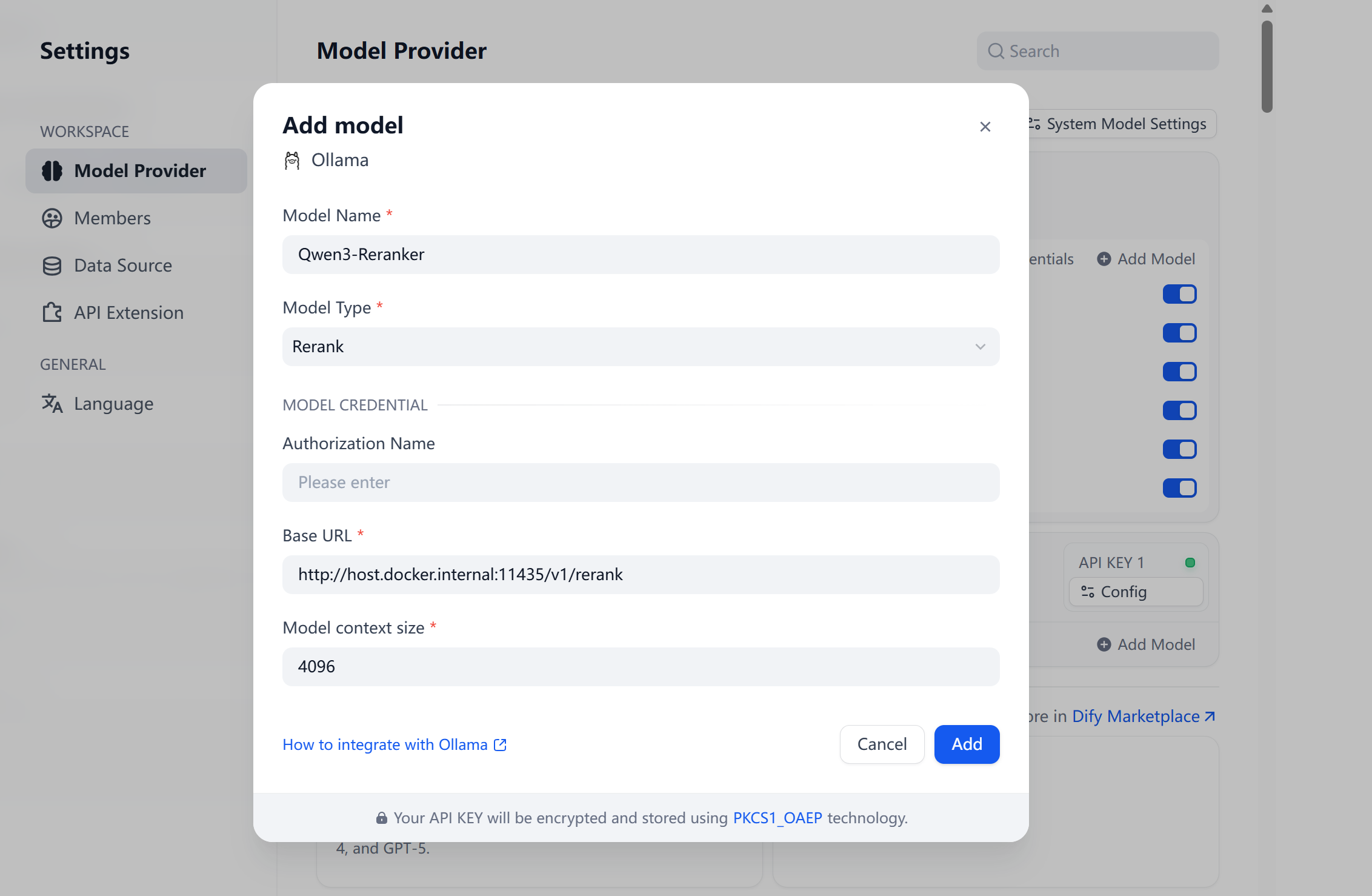

5. Integrate Ollama Rerank in Dify

Note: Ollama does not officially support rerank models. Instead, deploy a rerank model locally with a tool such as vLLM, llama.cpp, TEI, or Xinference, and enter the complete endpoint URL ending in "rerank". For a deployment example, see the llama.cpp deployment tutorial for Qwen3-Reranker.

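In the Ollama model provider settings in Dify, fill in the following fields:

- Model Name: the name of the rerank model as served by your rerank service.

- Base URL:

  - The plugin appends a rerank path if the URL does not already end with one. For other services, provide the full endpoint URL ending in "rerank".

  - If Dify is deployed using Docker, use a local network IP address rather than localhost to access the service.

- Model Type: choose the rerank model type.

- Model Context Length: the maximum context length of the rerank model.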
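The Base URL rule for rerank can be sketched as a small normalization function. Only the behavior of leaving URLs that already end in "rerank" untouched comes from the text above; the appended `/rerank` default is a hypothetical placeholder, since the exact suffix the plugin adds is not given here.

```python
def resolve_rerank_url(base_url: str, default_path: str = "/rerank") -> str:
    """Return base_url as-is if it already ends in "rerank", else append default_path.

    default_path is a hypothetical placeholder, not the plugin's documented suffix.
    """
    url = base_url.rstrip("/")  # ignore a trailing slash when checking the ending
    if url.endswith("rerank"):
        return url
    return url + "/" + default_path.lstrip("/")
```

Passing a full endpoint such as one ending in `/v1/rerank` leaves it unchanged, which is why services other than the plugin's default should be given their complete URL.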

After verifying that there are no errors, click "Add" to use the model in your application.